By Exactpro's Iosif Itkin, co-CEO and co-founder, Marina Kudriavtceva, SVP, Technology, Mikhail Odintsov, Capital Markets Division Lead, Alyona Bulda, SVP, Technology and Daria Degtiarenko, Senior Marketing Communications Manager

Introduction

Exactpro is an independent provider of AI-enabled software testing services to financial organisations globally. Our client network spans but is not limited to 60% of the Top 10 and 60% of the Top 20 global exchange groups. This article is based on an internal discussion that we had within the technology management team.

We recently reviewed several software testing engagements. Each of them stretched over a substantial period of time. Each of them produced a thorough regression testing capability for a large complex system. In some cases, the Exactpro team performed a knowledge transfer to the client’s team following the completion of the project. In some cases, we were asked to leverage the test artifacts produced by somebody else. And, finally, we also have engagements where the regression testing capability was built by ourselves – but by our former selves several years back – and now our current selves are responsible for maintaining it.

These engagements and their outputs have a material impact on our own business and on our partners’ operations, so we’ve decided to share some guidelines and the takeaways from our discussions.

Regression testing is where bugs come back to life

A regression testing capability is the competence to detect unexpected side effects of the changes introduced to a software product or system. More generally, regression testing is ‘any testing that is motivated by risk due to changes made in a previously tested product’ [1]. It helps to decrease the probability of losing the value that was previously present in the software. A regression testing capability stands on three pillars – Processes, Platforms and People.

In ‘Taking Testing Seriously’, James Bach and Michael Bolton point out that time constraints can hamper comprehensive regression testing after every build, so it may end up being ‘shallow’ and based on ‘a certain faith that good primary testing was performed and that the programmers are trying to be careful’. Yet, in relation to specific risks, regression testing ‘morphs into primary testing’ [1].

In this article, we will look at regression testing from three different perspectives:

- Psychological

- Technical

- Economic

The psychological perspective: loss aversion bias

From the perspective of psychology, owning something as substantial as a regression testing library makes stakeholders susceptible to the loss aversion bias (coined and popularised by Daniel Kahneman and Amos Tversky in Prospect Theory [2]). The loss aversion bias is a cognitive bias that makes people weigh potential losses more heavily than equivalent gains, and so avoid risks that could lead to a loss. Kahneman and Tversky demonstrated that ‘losses are typically twice as powerful psychologically as gains’.

Although biases can be considered a reflection of irrational thinking – like holding onto losing investments too long or avoiding beneficial risks – they are undoubtedly an adaptive evolutionary mechanism. They have helped us to make protective decisions in situations of uncertainty. Investing in fixed-income securities is an example of loss aversion at work. It is aimed at securing the assets you have, rather than risking ending up with nothing by following a more aggressive strategy.

With a regression testing library, adhering to an existing, historically developed test suite helps stakeholders stay on the safe side and mitigate risks in the comfort of using an asset they have already invested in. The stakeholders’ need to feel safe is very important: it has kept them and the business afloat. However, the ability to assess the current state of a test library and how much of an asset it presents to any given business is a separate point of discussion that we will make shortly. But for now, let’s move on to the next regression testing aspect – technology.

The technical perspective

All software testing is model-based testing. All tests are based on models [3]. Sometimes these models are implicit and exist in software testers' minds. Sometimes these models are explicit and formally defined.

Regression testing is based on the assumption that, for many scenarios, the new version of the software behaves very similarly to the previous version. The model of the system under test is the previous version of the system.

Overwhelmed by overfitting?

In machine learning terms, a regression testing library tends to overfit to the data points collected from the previous version of the software. As with most machine learning models, the success of regression testing depends on the availability, relevance, diversity, interpretability and accuracy of the data.

If access to the previous version of the software is still available, it can be used as a generative model to produce additional data points. To effectively leverage this generative process, it is crucial to preserve the surrounding environment and configuration.
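As a minimal sketch of this idea, assuming both versions can be invoked side by side in the same environment: the previous version acts as the model, and generated inputs yield additional data points to compare against the new version. The functions `previous_version` and `new_version` are hypothetical stand-ins for illustration, not part of any real system.

```python
import random

def previous_version(quantity: int, price_cents: int) -> int:
    """Hypothetical stand-in for the previously tested release."""
    return quantity * price_cents

def new_version(quantity: int, price_cents: int) -> int:
    """Hypothetical stand-in for the new release, with an injected regression."""
    if quantity > 1000:
        return 1000 * price_cents  # regression: large quantities are silently capped
    return quantity * price_cents

def find_divergences(old, new, samples: int = 1000, seed: int = 42):
    """Generate inputs, run both versions and collect the inputs where outputs differ."""
    rng = random.Random(seed)  # a fixed seed keeps the generated data reproducible
    divergences = []
    for _ in range(samples):
        args = (rng.randint(1, 2000), rng.randint(1, 10_000))
        expected, actual = old(*args), new(*args)
        if expected != actual:
            divergences.append((args, expected, actual))
    return divergences

divergences = find_divergences(previous_version, new_version)
print(f"{len(divergences)} of 1000 generated inputs diverge")
```

A divergence found this way is not automatically a defect: it may reflect an intended change, so each one still needs to be interpreted.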

All models are wrong, but some are useful [4]. And that brings us to the third regression testing aspect we are looking into – the economic perspective.

The economic perspective

Usefulness

Software testing is an information service. The information we provide is the result of a deep, objective and comprehensive exploration of the system under test, its components and their interdependencies. Our core efforts on any given project are focused on creating an end-to-end test library and fine-tuning it to a client system in a way that allows us to achieve the most efficient test coverage with the least time, hardware and software resources spent on test execution. The product of software testing is a test library – it is essentially a subset of tests that has been iteratively selected as the one having the highest probability of discovering potential defects. This subset is also a powerful regression testing tool that can be used for assessing the quality of the following versions of the software.

Regression testing is useful as long as it is capable of providing information about the system under test. A regression testing capability is a large-scale technology object that is aimed at bringing value, while being almost intangible (except for its presence in code and data, supporting processes and people’s skillsets). But the strategic value it brings testifies to it being a substantial material asset: it embodies the organisational knowledge about the system and supports long-term growth, stability and auditability; it is also a collaborative, cross-functional effort with a high cost of replacement. And every asset needs to be managed.

The value of ownership

As an asset, a regression testing library can be actively kept up to date. This requires constantly enriching it with new system functionality to match the development of the system itself. It can also be replaced with another asset – a different regression library. Finally, it can be left in its current state, which implies that it will gradually depreciate. Perhaps the goal is to eventually write it off; in that case, keeping it unchanged would be a logical scenario.

Any of these managerial choices is acceptable, as long as it is consistent with subsequent actions and business goals. Examples of incoherent actions include believing that regression testing brings value but retiring the library nonetheless, or not keeping it up to date with the system’s development. Conversely, there may be plans to phase the library out, yet ongoing improvements continue despite these plans.

There may also be no clear strategy for the asset, or several strategies may intermingle.

To economically assess the value of regression testing, it is crucial to be able to differentiate between a test library that is alive and one that is dead – especially if the strategy is to maintain a working test library.

Ways to assess if one’s regression testing capability is alive

When it comes to dealing with a complex system, the answer may not be clear-cut. Everything that complex exists on a spectrum. For example, it can be mostly dead or almost alive. It can be evolving or decaying.

So here is our guidance on how to find out the current state of a test library and how to drive it towards a desired condition. These points can be relied on to assess the state of a regression test suite at any stage of the system’s lifecycle.

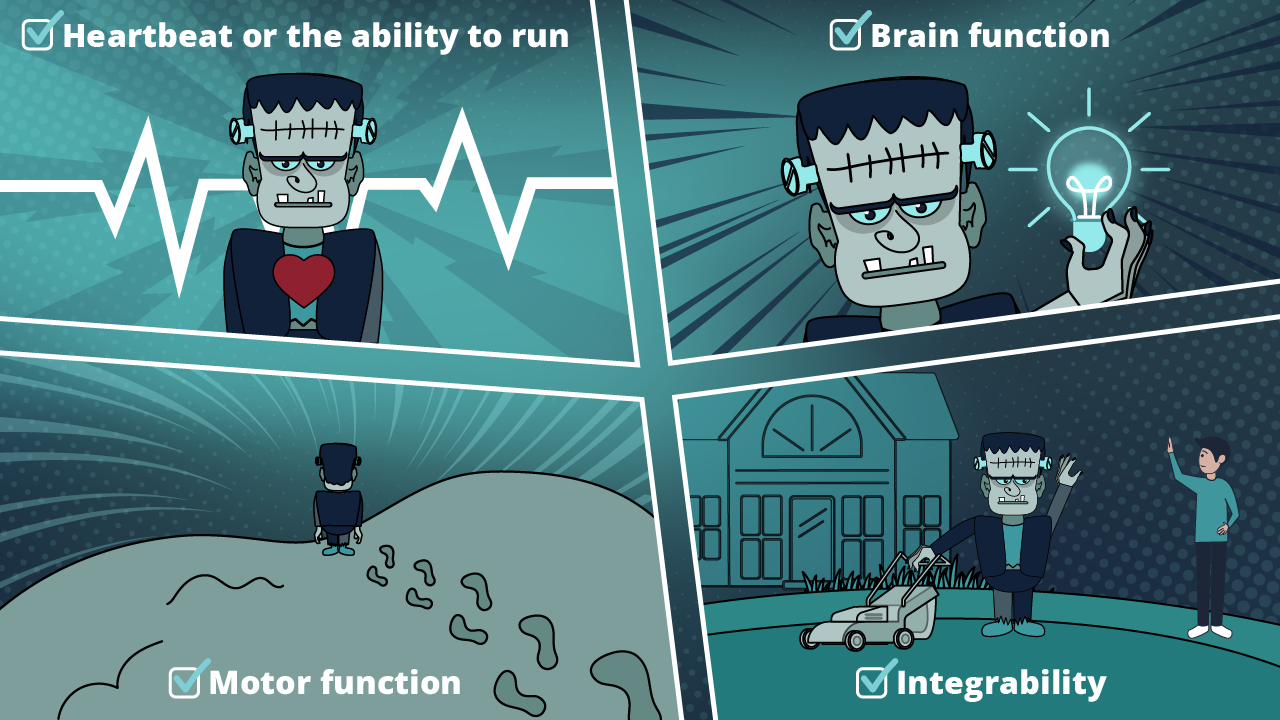

- Heartbeat or the ability to run

The frequency of use and the ability to run on an ongoing basis. If a test library exists as a set of artifacts and not as a continuous stream of events, most likely, it is dead. While regression testing itself is motivated by a change to a previously tested product, maintaining a heartbeat might be a good thing even in the absence of change.

- Brain function or the ability to evaluate results (the efficiency of bug detection and description)

The test library supports issue detection with a certain level of consistency and allows teams to interpret the positive as well as the negative results – a sign of its cognitive function being intact. The intent of any software testing is not to confirm things, but to find bugs.

- Motor function or the ability to move

The test library can be reconfigured, it can be moved, deployed on different servers, and its transferability is not limited to a single environment – a sign of robust mobility.

- Integrability or the ability to integrate into the required parts of the surrounding technology ecosystem

The test library can be connected to databases and other upstream and downstream systems for various purposes integral to comprehensive testing. It is an important sign of a system’s viability.

- Neuroplasticity

The test library has functional flexibility: obsolete functions of the existing test library can be reassigned to other tools and/or broken up into smaller processes. Structural flexibility allows testers to replace internal parts that are no longer alive with new, robust functionality. The primary method of assessment is evaluating every part of the system for potential replication and eventual replacement. Notably, all three aspects (People, Processes and Platforms) should be taken into account.

- The ability to grow and stay fresh

Once the test library is considered alive, testing teams can move on to simplifying and optimising its architecture, as well as scaling it, if needed. ‘The high art of regression testing is keeping it fresh’ [1].
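The first item on the checklist, the heartbeat, lends itself to a simple automated check. The sketch below is only an illustration: the function name `heartbeat_status`, the status labels and the seven-day `max_gap` threshold are assumptions, and a suitable threshold depends on the release cadence of the system under test.

```python
from datetime import datetime, timedelta

def heartbeat_status(run_times, now, max_gap=timedelta(days=7)):
    """Classify a test library by how recently and how regularly it has run.

    max_gap is an assumed threshold, not a recommended value.
    """
    if not run_times:
        return "no heartbeat"  # artifacts exist, but nothing has ever been executed
    runs = sorted(run_times)
    if now - runs[-1] > max_gap:
        return "flatlined"  # the library used to run, but has stopped
    gaps = [later - earlier for earlier, later in zip(runs, runs[1:])]
    if any(gap > max_gap for gap in gaps):
        return "irregular"  # running again, but with long pauses in its history
    return "steady"

now = datetime(2025, 10, 31)  # arbitrary reference date for the example
runs = [now - timedelta(days=d) for d in (1, 3, 5, 8)]
print(heartbeat_status(runs, now))  # prints "steady"
```

A steady heartbeat only shows that the library runs; the remaining items on the checklist still have to be assessed separately.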

Two key obstacles to designing and implementing a good strategy are a failure to face the challenge (avoiding or ignoring the core problem that needs to be addressed) and an unwillingness or inability to choose (a refusal to make hard trade-offs) [5].

We hope our Halloween checklist can be handy and will help you to carve your own path. Please let us know your thoughts.

References

- [1] Bach, J. and Bolton, M. (2025) Taking Testing Seriously: The Rapid Software Testing Approach. Wiley, Kindle Edition, p.159.

- [2] Kahneman, D. and Tversky, A. (1979) Prospect Theory: An Analysis of Decision under Risk. Econometrica, 47(2), pp.263–291.

- [3] Kaner, C. and Bach, J. (2008) Black Box Software Testing. Cem Kaner & James Bach.

- [4] Box, G. E. P. (1976) Science and Statistics. Journal of the American Statistical Association, 71(356), pp.791–799.

- [5] Rumelt, R. P. (2011) Good Strategy, Bad Strategy: The Difference and Why It Matters. Crown Business.