Introduction

Testing in post trade is complicated by the high volume of transaction data, the large number of systems and parties involved, and the need for strict regulatory compliance. The latter includes compliance with the settlement cycle and other timeframes inherent to the post-trade system lifecycle. Software testing in post trade helps ensure the accuracy, efficiency, and compliance of financial transactions after their execution. Exactpro’s test frameworks are fine-tuned to post-trade system specifics and allow for efficient quality assessment of their local and global implementations and settlement cycle standards. This case study can be used as reference material showcasing Exactpro’s functional and non-functional testing capabilities for post-trade technology.

Delivering Large Post-Trade Initiatives: Key Challenges

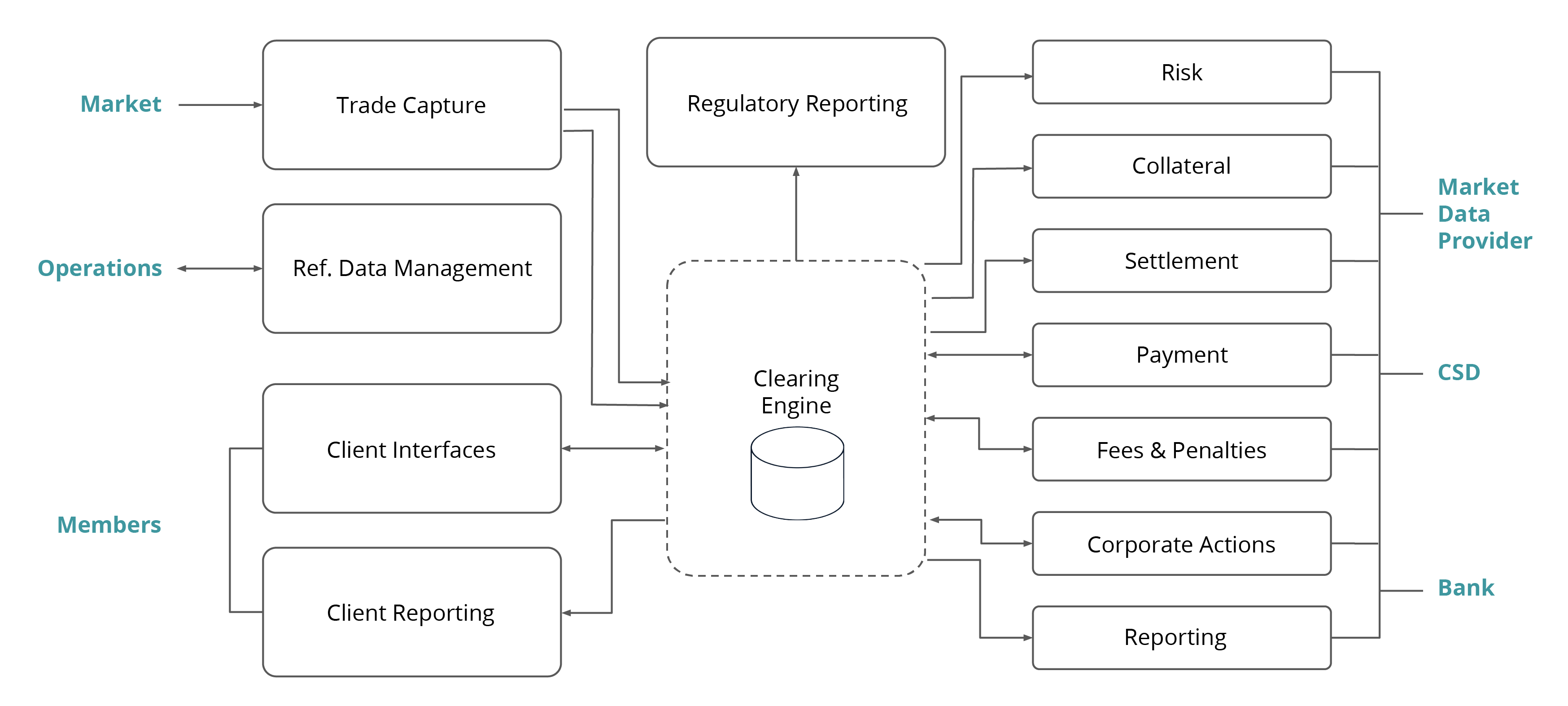

A clearing system has highly complex features requiring a high degree of accuracy and skilled resources:

- reference data;

- risk management;

- schedule (explained further).

There are also external parties that contribute to the complexity:

- markets;

- participants with different types of connections;

- settlement and payment systems;

- market data providers, and interventions from the operations team.

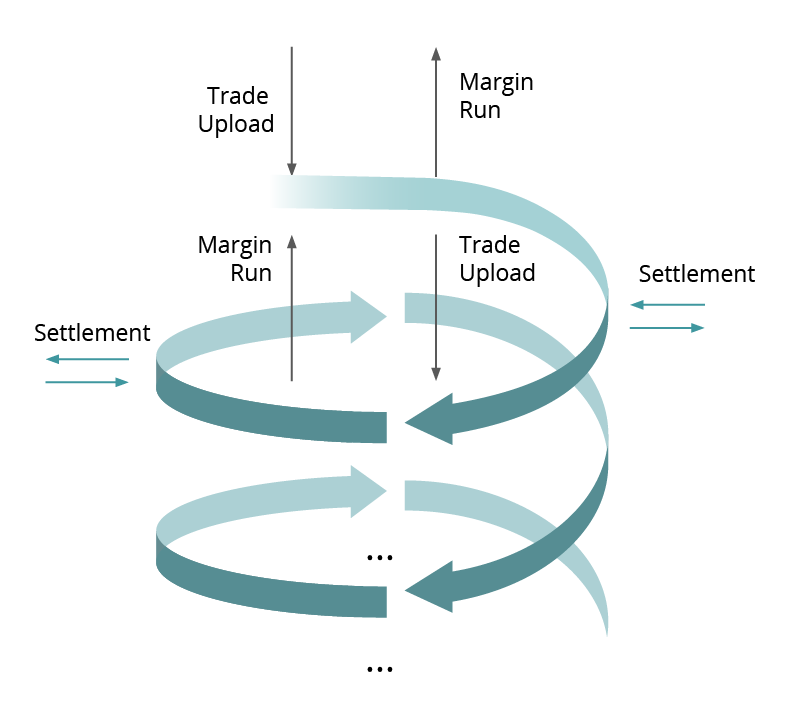

A clearing system runs some routine processes repeated every day, in a predefined order and at predefined times:

- Trade upload;

- Smart computations;

- Settlement sessions;

- Collateral uploads;

- Reporting, etc.

The processes depend on each other. Several data inputs are also retained in the system, which presents an additional challenge.

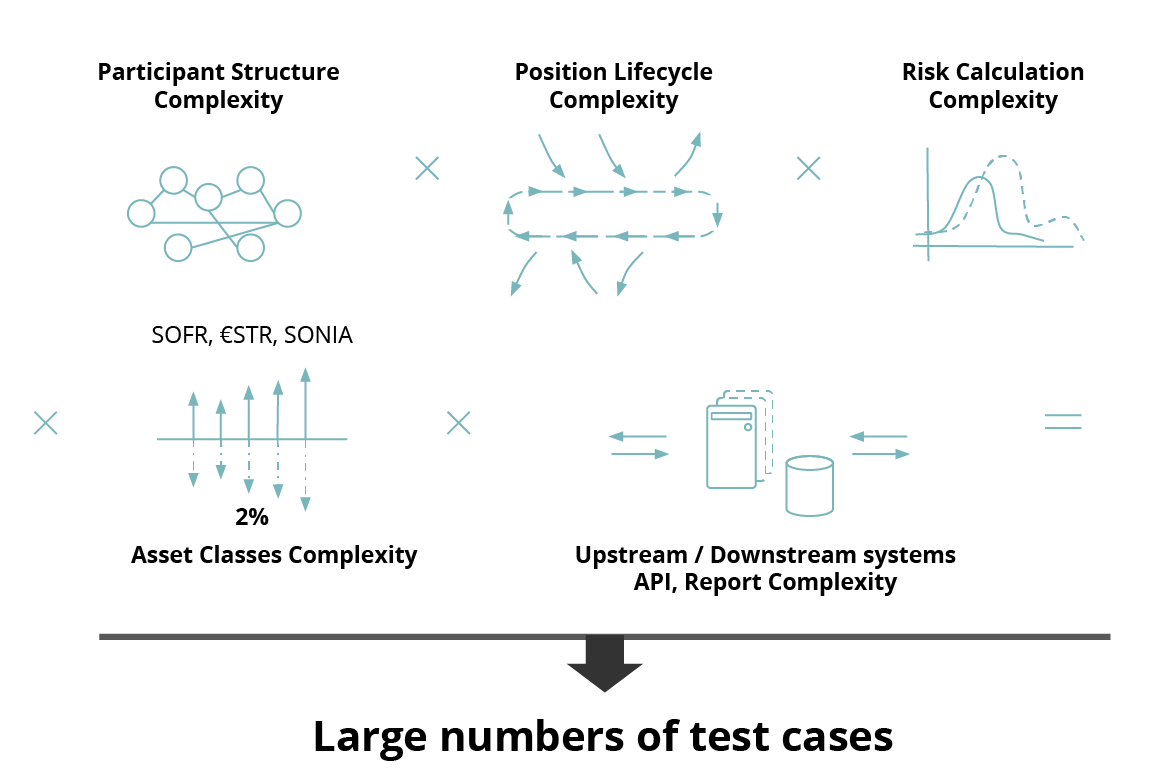

- A multitude of components in modern complex post-trade infrastructures;

- Upstream and downstream system dependencies;

- A very complex participant structure;

- Trade/Xfer/Position/Account life cycles;

- A varying number of asset classes;

- The complexity of the risk calculation process;

- Access via a set of API endpoints.

The challenges and their parameterizations lead to a significant number of test scenarios.

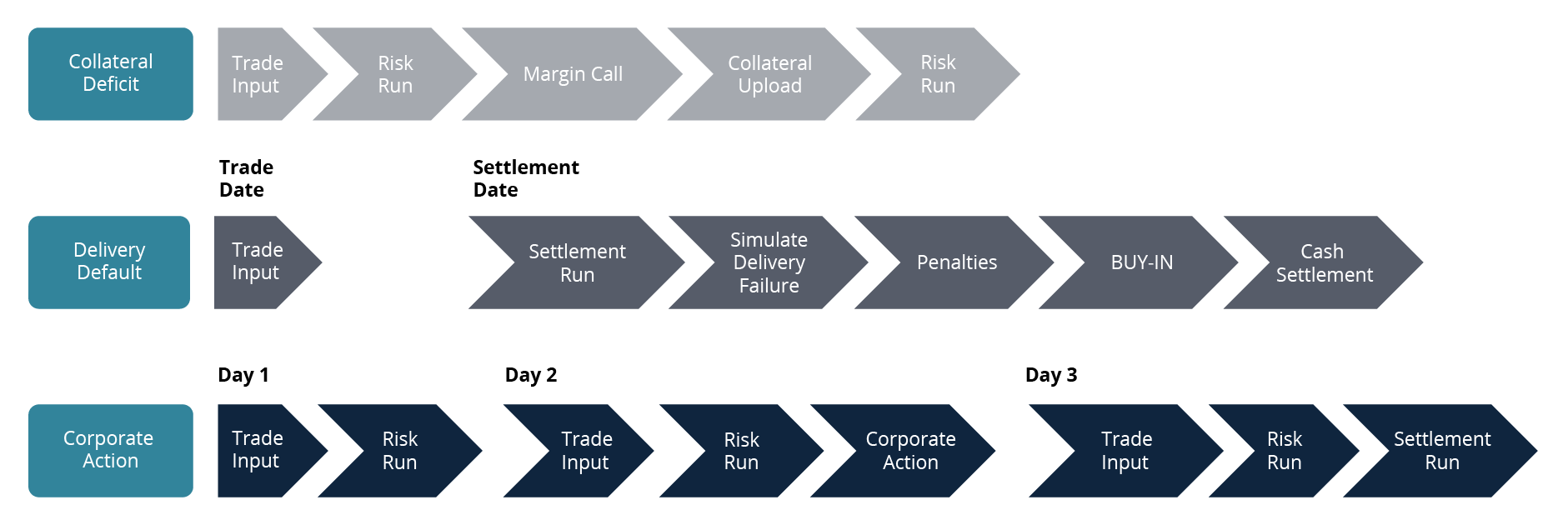

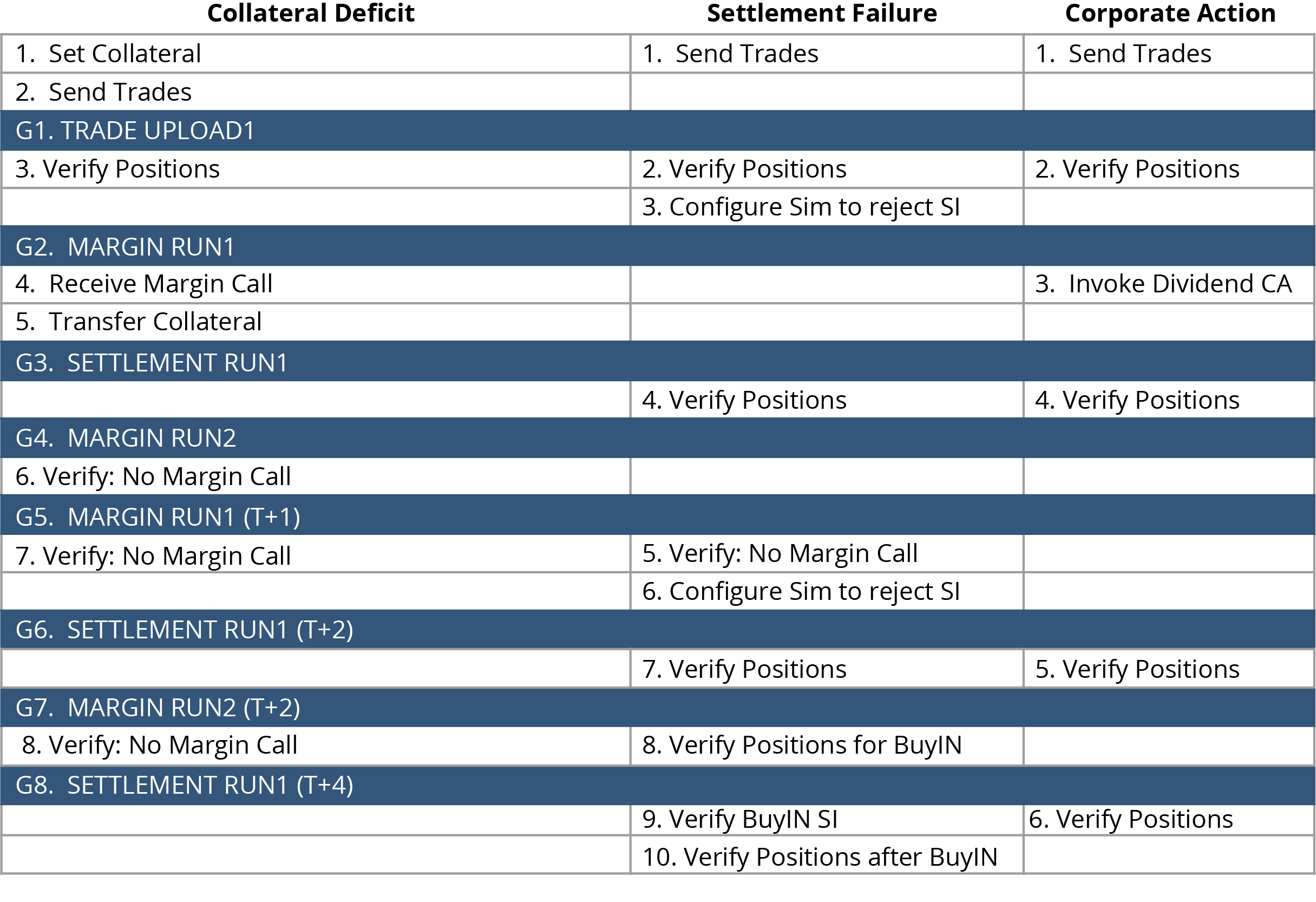

Project Requirements: Collateral Deficit, Delivery Default, Corporate Action Testing Scenarios

Case study #1: Collateral Deficit

- Trades need to invoke the initial margin call;

- Margin computations are checked;

- Inputs from participants that deliver collateral to cover the CCP’s risk exposure are simulated;

- Additional computations with the regulated collateral are done.
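
The expected values for such a scenario can be sketched as a small calculation. This is only an illustration under stated assumptions: the deficit is taken to be the initial margin requirement minus the haircut-adjusted value of delivered collateral, floored at zero. The names `CollateralItem`, `collateral_value` and `collateral_deficit`, as well as the haircut rates and amounts, are hypothetical and do not describe the rules of any actual clearing system.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class CollateralItem:
    """One simulated collateral delivery from a participant (hypothetical shape)."""
    market_value: Decimal
    haircut: Decimal  # fraction in [0, 1] deducted from the market value


def collateral_value(items):
    """Sum the haircut-adjusted value of all delivered collateral."""
    return sum((i.market_value * (Decimal(1) - i.haircut) for i in items), Decimal(0))


def collateral_deficit(initial_margin, items):
    """Return the shortfall a margin call would need to cover (never negative)."""
    return max(Decimal(0), initial_margin - collateral_value(items))


# Illustrative numbers only: a margin requirement raised after a trade upload.
requirement = Decimal("1000000")
delivered = [
    CollateralItem(Decimal("600000"), Decimal("0.00")),  # cash, no haircut assumed
    CollateralItem(Decimal("400000"), Decimal("0.10")),  # bonds, 10% haircut assumed
]
deficit = collateral_deficit(requirement, delivered)
print(deficit)  # 40000.00
```

Using `Decimal` rather than floating point keeps the expected values exact, which matters when they are compared with monetary amounts reported by the system.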

Case study #2: Delivery Default

- Multiple-day scenarios starting with a trade upload and continuing with settlement during the next days;

- Within this time, various inputs from settlement systems are simulated, where one participant fails on these positions and another one settles, etc.;

- Processes that run after the settlement period are checked.

Case study #3: Corporate Action

- Executed over several days;

- Starts with a trade upload;

- Need to verify that corporate actions are only applied to the positions that are eligible;

- Need to check that further computations take the new and original positions into account properly.
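
The eligibility check can be sketched as a comparison of positions before and after the event. This is a minimal illustration that assumes a simple quantity-multiplying event (for example, a stock split) and a precomputed set of eligible position IDs; `check_corporate_action`, the position IDs and the ratio are all hypothetical.

```python
def check_corporate_action(before, after, eligible, ratio):
    """Return the IDs of positions whose post-event quantity is wrong.

    before/after map position ID -> quantity; eligible is a set of position IDs.
    Eligible positions are expected to be multiplied by ratio, and all other
    positions are expected to stay unchanged.
    """
    failures = []
    for pos_id, qty in before.items():
        expected = qty * ratio if pos_id in eligible else qty
        if after.get(pos_id) != expected:
            failures.append(pos_id)
    return failures


# Illustrative data: P1 and P2 are eligible for an assumed 2-for-1 split, P3 is not.
before = {"P1": 100, "P2": 40, "P3": 10}
after = {"P1": 200, "P2": 40, "P3": 20}  # P2 was missed, P3 was adjusted by mistake
failures = check_corporate_action(before, after, eligible={"P1", "P2"}, ratio=2)
print(failures)  # ['P2', 'P3']
```

The same function catches both kinds of defect: an eligible position that was skipped and an ineligible position that was changed.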

Addressing Complexity: Testing Complex Multistep Scenarios

Multi-step and multi-day scenarios need to be tested, and several scenarios may need to run at the same time. Our solution uses an automated test tool that runs several scenarios, organizes the test steps in batches and aligns the test schedule with the system schedule.

Our test tool allows us to execute all the test steps in a predefined order, which facilitates aligning the testing order with the events in the clearing system.
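
A minimal sketch of how steps from several scenarios could be ordered into batches that follow the system schedule. The phase identifiers mirror the routine daily processes listed earlier; `Step`, `build_batches` and the sample steps are hypothetical and do not describe the actual test tool.

```python
from dataclasses import dataclass
from itertools import groupby

# Assumed daily order of system processes, based on the routine list in the text.
PHASES = ["trade_upload", "computations", "settlement", "collateral_upload", "reporting"]


@dataclass(frozen=True)
class Step:
    scenario: str  # scenario the step belongs to
    day: int       # business-day offset from the start of the run
    phase: str     # system phase in which the step must be executed
    action: str    # human-readable description of the step


def _order(step):
    """Sort key: business day first, then position of the phase within the day."""
    return (step.day, PHASES.index(step.phase))


def build_batches(steps):
    """Group steps from all scenarios into batches ordered by (day, phase)."""
    ordered = sorted(steps, key=_order)  # stable sort keeps per-scenario order
    return [((day, PHASES[idx]), list(group))
            for (day, idx), group in groupby(ordered, key=_order)]


steps = [
    Step("corporate_action", 1, "computations", "verify adjusted positions"),
    Step("delivery_default", 0, "trade_upload", "upload trades"),
    Step("corporate_action", 0, "trade_upload", "upload trades"),
    Step("delivery_default", 1, "settlement", "simulate a failed delivery"),
]
batches = build_batches(steps)
for (day, phase), batch in batches:
    print(day, phase, [s.scenario for s in batch])
```

Steps from different scenarios that target the same day and phase land in one batch, so a single pass through the system schedule can serve several scenarios at once.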

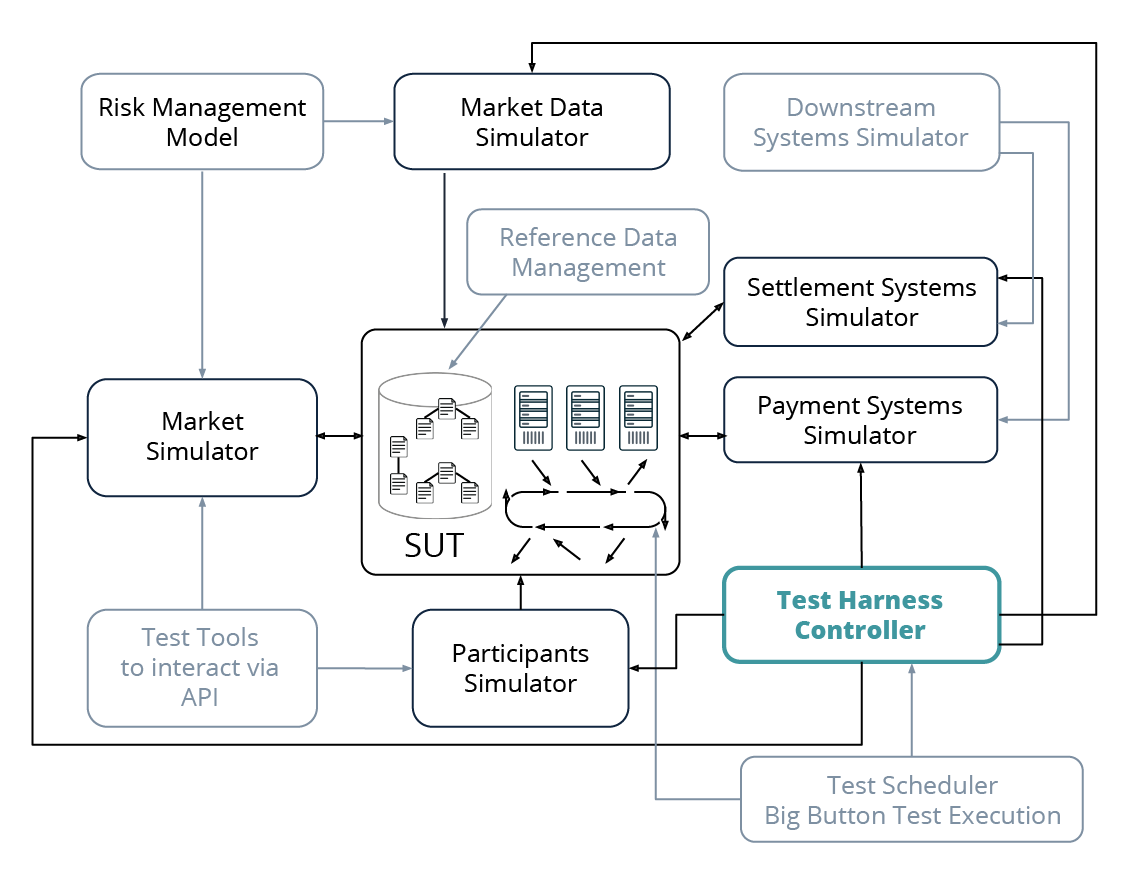

Simulators are developed and integrated with the test harness, which controls both the simulators and the execution of test cases.

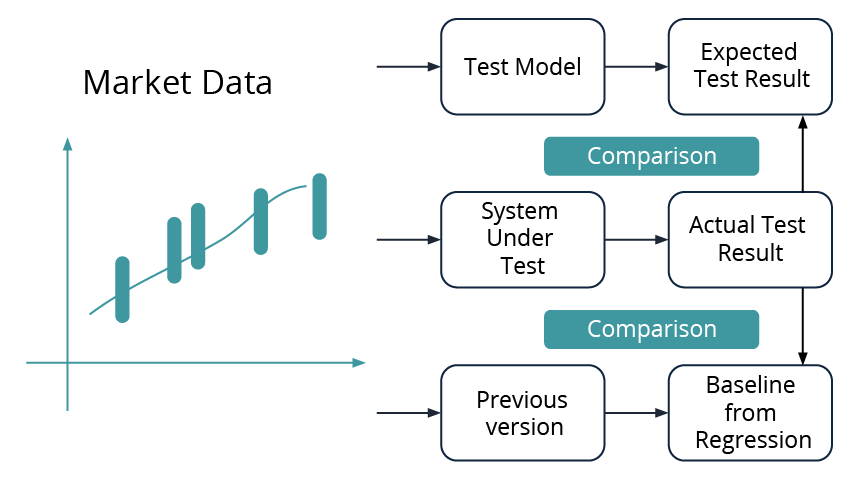

Addressing Complexity: Testing Risk Calculation Algorithms

- Testing this area is challenging because the underlying mathematical concepts are quite complex and large volumes of data are used.

- One solution is to build a model that is based on simplified assumptions but replicates the algorithms and calculations done by the system, and to compare the actual results of the system under test with the expected results computed by the model.

- Another solution is to take a reliable set of results from regression testing of the previous version as a baseline and compare it with the results produced by the system under test.
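
The baseline approach can be sketched as a keyed comparison with a numeric tolerance. This is an illustration only: `compare_to_baseline`, the account IDs and the tolerance value are hypothetical, and a real comparison would likely cover many more fields per record.

```python
import math


def compare_to_baseline(baseline, actual, rel_tol=1e-9):
    """Return a dict describing every difference between baseline and actual.

    Both arguments map an identifier (for example, an account ID) to a numeric
    result. Each difference is stored as (kind, baseline_value, actual_value).
    """
    diffs = {}
    for key in sorted(baseline.keys() | actual.keys()):
        if key not in actual:
            diffs[key] = ("missing", baseline[key], None)
        elif key not in baseline:
            diffs[key] = ("unexpected", None, actual[key])
        elif not math.isclose(baseline[key], actual[key], rel_tol=rel_tol):
            diffs[key] = ("mismatch", baseline[key], actual[key])
    return diffs


# Illustrative data: results from the previous version versus the new build.
baseline = {"ACC1": 1250.0, "ACC2": 980.5, "ACC3": 0.0}
actual = {"ACC1": 1250.0, "ACC2": 981.0, "ACC4": 10.0}
diffs = compare_to_baseline(baseline, actual)
for key, diff in diffs.items():
    print(key, diff)
```

Reporting missing and unexpected keys separately from value mismatches helps distinguish a calculation change from a change in which records the system produces.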

Exhaustive Test Coverage with a Substantial Number of Test Scenarios in a Regression Cycle

- We need to run a substantial number of test scenarios and run them within an appropriate time frame;

- Our approach is to create different test cases and test tools capable of running them;

- Further, we automate the test cases with the tool, control the test environment and make sure it is in a state appropriate for running regression tests;

- Finally, the automated regression is run in what we call “The Big Button” mode.

Addressing Complexity: System Modelling

We implement an algorithm based on the same requirements as the corresponding algorithm in the system under test (SUT).

The model in the test harness takes the same data for calculation as the SUT algorithm and predicts its calculations. Both results are compared and shown to users in a test run report.

This approach allows validations to be run against any environment with any data, and it is suitable for functional, performance and resilience testing.
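
A minimal sketch of the model-based comparison follows. It assumes a deliberately simple, made-up requirement (margin per account is the sum of absolute quantity times price times a margin rate). `Position`, `model_margin`, `build_report` and the sample SUT results are hypothetical. In a real harness the SUT values would be read from the system rather than hard-coded.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Position:
    account: str
    quantity: int         # signed: a negative value means a short position
    price: Decimal
    margin_rate: Decimal  # hypothetical per-instrument rate


def model_margin(positions):
    """Independent model: margin per account = sum(|quantity| * price * rate)."""
    totals = {}
    for p in positions:
        exposure = abs(p.quantity) * p.price * p.margin_rate
        totals[p.account] = totals.get(p.account, Decimal(0)) + exposure
    return totals


def build_report(positions, sut_results, tolerance=Decimal("0.01")):
    """Compare model predictions with SUT output and return report rows."""
    expected = model_margin(positions)
    rows = []
    for account in sorted(expected):
        actual = sut_results.get(account)
        ok = actual is not None and abs(actual - expected[account]) <= tolerance
        rows.append((account, expected[account], actual, "PASS" if ok else "FAIL"))
    return rows


positions = [
    Position("A", 100, Decimal("10.00"), Decimal("0.05")),
    Position("A", -50, Decimal("20.00"), Decimal("0.10")),
    Position("B", 10, Decimal("100.00"), Decimal("0.02")),
]
sut_results = {"A": Decimal("150.00"), "B": Decimal("25.00")}  # stand-in for SUT output
report = build_report(positions, sut_results)
for row in report:
    print(row)
```

Because the model only needs the same inputs as the SUT, the comparison does not depend on precomputed expected values, which is what makes it usable against any environment and dataset.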

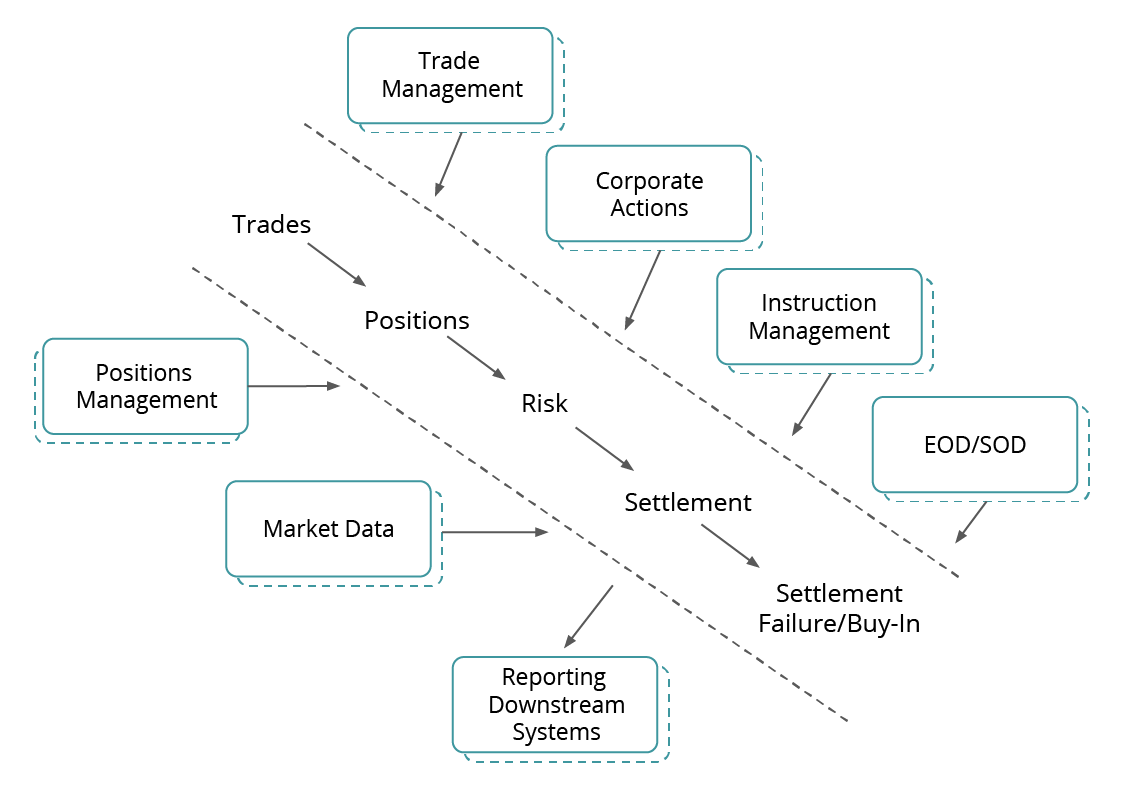

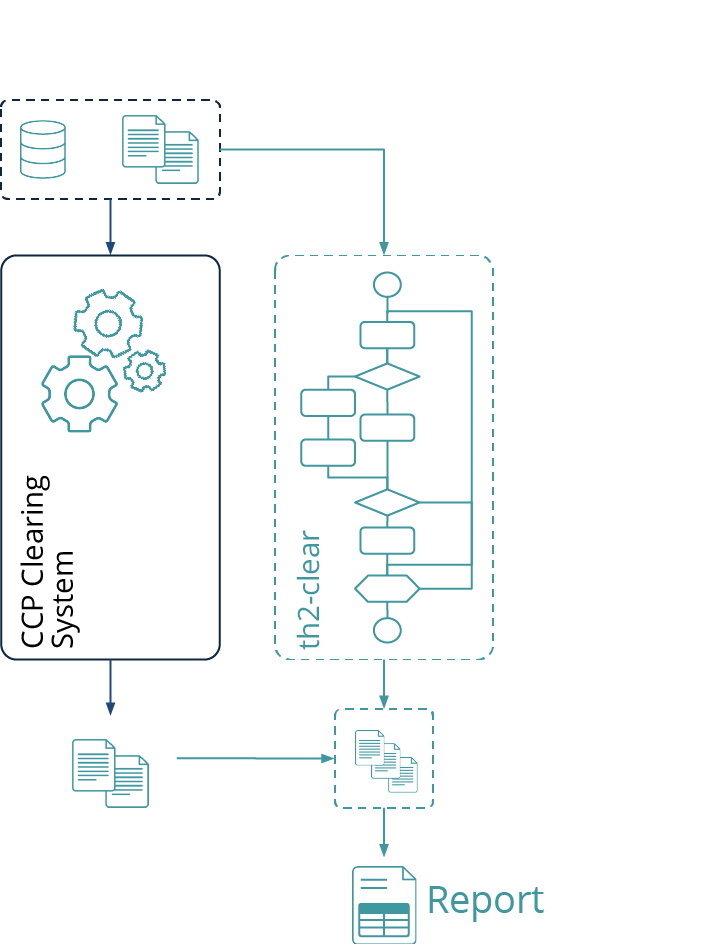

Delivering Large Post-Trade Initiatives: A Holistic Integrated Automated Test Solution

- Simulators are developed as a part of the test harness. Understanding the upstream and downstream systems, and having the formats and layouts of the interim protocols to those applications, makes it possible to emulate these streams and continue the test design. This approach provides flexibility and independence from the availability of the surrounding systems.

- The test harnesses are configured to support APIs that mimic participants’ activities, market updates and other processes.

- Market data streams are defined by replacing chaotic updates with emulated ones. The emulated updates contain predefined datasets, so QA can rely on this “controlled” data and design the expected behavior based on it.

- Test harness controllers are created, especially when the system goes through very complex daily, weekly and monthly cycles.

- Test schedulers are developed to incorporate all end-to-end test scenarios into one test library run – “The Big Button.”
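
The idea of “controlled” market data can be sketched as a simulator that replays a predefined dataset in a fixed order, so that expected results can be designed in advance. The class `MarketDataEmulator`, its methods and the sample instruments are hypothetical. An actual simulator would publish over the protocol that the system under test expects.

```python
from collections import deque


class MarketDataEmulator:
    """Replays a predefined list of (instrument, price) updates in a fixed order."""

    def __init__(self, updates):
        self._queue = deque(updates)
        self.last_price = {}  # latest published price per instrument

    def publish_next(self):
        """Emit the next predefined update, or None when the dataset is exhausted."""
        if not self._queue:
            return None
        instrument, price = self._queue.popleft()
        self.last_price[instrument] = price
        return instrument, price

    def publish_all(self):
        """Emit every remaining update and return them in publication order."""
        published = []
        while (update := self.publish_next()) is not None:
            published.append(update)
        return published


# Illustrative dataset: the same sequence is replayed on every regression run.
dataset = [("BOND1", 99.5), ("EQ1", 12.0), ("BOND1", 99.75)]
emulator = MarketDataEmulator(dataset)
published = emulator.publish_all()
print(emulator.last_price)  # {'BOND1': 99.75, 'EQ1': 12.0}
```

Since every run sees identical prices in an identical order, any change in the computed results can be attributed to the system rather than to the input data.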