
The article was first published in the September 2023 issue of the World Federation of Exchanges Focus Magazine.

‘Digital twins’ are an established means of system simulation across industries such as automotive, space and manufacturing. They are used to obtain valuable insights about a system’s quality, analyse its performance, and even prevent or remediate breakdowns [1]. However, building digital twins to assess the quality of complex transaction-processing systems, such as exchanges and payment, clearing and settlement systems, has been explored far less extensively. This article aims to bridge this gap and shine a well-deserved spotlight on the significance of simulation in fintech systems delivery.

‘Houston, we’ve had a problem.’

NASA is considered a pioneer in the use of the ‘living model’, now better known as a system’s ‘digital twin’, a term also introduced within the US space agency back in 2010. In an interview with Forbes, John Vickers, NASA’s manufacturing expert and manager of its National Center for Advanced Manufacturing (currently Principal Technologist), explained: “The ultimate vision for the digital twin is to create, test and build our equipment in a virtual environment.” [2] In the 1960s, each NASA spacecraft was meticulously replicated in a physical counterpart [3] (with limited digital capabilities, given the computational power available at the time) for the purposes of study, simulation, or forensic analysis. Such analysis was, for instance, of critical importance during the Moon-bound Apollo 13 mission. Having the model at hand helped the team respond to the oxygen tank explosion and the subsequent damage to the main engine, saving the lives of the three astronauts on board.

A modern digital model strives to be an exact digital replica, obtained by connecting the physical system to sensors and capturing the data they transmit. The advantages of investing in such simulations for safety-critical systems seem intuitive: a malfunction in a virtual spacecraft component rather than engine damage during a live launch, or a crash of a digital autonomous vehicle rather than human lives lost to under-tested technology. Software defects that reach the financial markets can, however, be no less costly. Although undoubtedly less grave in nature, they are still capable of having a devastating effect on livelihoods, reputations, and data safety and security.

Models in Capital Markets Testing

What would be the fintech equivalent of the enhancements a digital twin can bring to a trading infrastructure?

Any given exchange environment can require one to two hundred servers to stay operational. A model of the same environment may require just two servers to run the simulations covering all of the system’s quality assessment needs. This significant optimisation of resources cuts down the associated hardware footprint and costs.

Sufficiently thorough regression testing can require substantial time and effort. Using a model would provide a highly efficient version of the regression test library that leverages computing capabilities to produce and analyse diverse scenarios. Increased testing speed enables faster delivery and promotes risk-informed decisions and better delivery strategies.

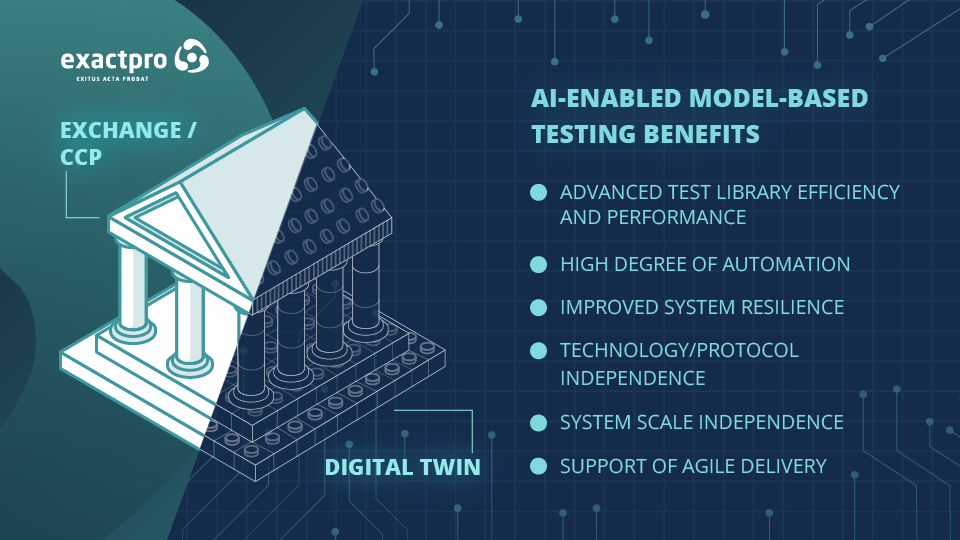

It is possible for a model to be built as the system matures, which, in line with agile software development methodologies, enables stakeholders to receive objective information about the system early in the development lifecycle and resolve most issues long before they become critical.

Because it unlocks greater control over system components and functions, system modelling provides fundamentally deeper system analysis than SLA-outlined requirements traceability matrices, even more so with the use of AI.

The Synergy of Modelling and AI

Leveraging AI-enabled modelling in testing fintech systems helps transform the quality and size of the test libraries to achieve better efficiency, provides more effective test coverage of the system’s functionalities (resulting in better detection of potential vulnerabilities), and introduces advanced levels of automation. Providing ‘more effective test coverage’ using AI means applying sets of algorithms to generate and, subsequently, carefully reduce the array of test scripts to a subset that contains only a fraction of the original volume while representing the same level of test coverage. The resulting AI-generated and optimised test library is a more performant and less resource-heavy version of a traditional test library. The optimised library can be gradually extended with scripts covering the latest functionality. It can also be continually revised and enriched with more elaborate scripts based on relevant information received from the system.
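The reduction step described above can be illustrated with a minimal sketch. It is not the algorithm used in any particular product: it assumes that each test script can be mapped to the set of system behaviours it exercises, and it uses a plain greedy set-cover heuristic as a stand-in for the ‘sets of algorithms’ mentioned in the text. The function name `reduce_test_library` and the script and behaviour names are hypothetical.

```python
from typing import Dict, List, Set


def reduce_test_library(coverage: Dict[str, Set[str]]) -> List[str]:
    """Greedy set cover: keep picking the script that covers the most
    still-uncovered behaviours until nothing is left uncovered.

    `coverage` maps a (hypothetical) script name to the set of behaviours
    it exercises. The result covers exactly the same behaviours as the
    full library, usually with far fewer scripts.
    """
    remaining: Set[str] = set()
    for behaviours in coverage.values():
        remaining |= behaviours

    selected: List[str] = []
    while remaining:
        # Iterate in sorted order so that ties are broken by script name,
        # which keeps the result deterministic.
        best = max(sorted(coverage), key=lambda name: len(coverage[name] & remaining))
        selected.append(best)
        remaining -= coverage[best]
    return selected


# Illustrative library: five scripts, six distinct behaviours.
library = {
    "t1_new_order": {"order_entry", "ack"},
    "t2_new_and_fill": {"order_entry", "ack", "matching", "trade_report"},
    "t3_cancel": {"order_entry", "ack", "cancel"},
    "t4_fill_only": {"matching", "trade_report"},
    "t5_amend_cancel": {"amend", "cancel"},
}
print(reduce_test_library(library))
```

In this example two of the five scripts are enough to cover all six behaviours. A greedy heuristic does not guarantee the smallest possible subset, but it is simple, and it preserves coverage by construction.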

Because the main analytical power of the model is kept separate from the code, AI-enabled testing is applicable to a wide variety of use cases within the industry and beyond, and it is agnostic to both the system and its scale. The amount of data the approach requires for training is not affected by the infrastructure size or level of technology maturity. Smaller-scale infrastructures may not possess enough internal or historical data for introducing machine learning; the approach resolves this by generating synthetic data using the model’s predictive capacity.

More established infrastructures and well-oiled operations can also benefit from AI-enabled testing, which can help evaluate whether the current test coverage is sufficient and adequate to the complexity of the system under test.

In the context of ever-evolving technology infrastructures, system modelling optimises the resources involved and provides unparalleled control over the analysis and exploration of the system’s behaviour, because the two systems essentially share the same DNA.

Identical vs fraternal

Or do they? Yes and no. Let’s suppose that the trading platform is predominantly coded in Java and C++. Ironically, a twin system coded in the very same languages may, in fact, replicate the exact defects already lurking in the original. The same goes for the team in charge. Much like parents who love their offspring unconditionally, the team developing the system may be blind to its faults, causing defects introduced into the system to be replicated in the model. This is also the reason why a previous version of the system alone cannot be used as its objective model (it does, however, carry limited regression testing value) [4].

It is an independent team’s ability to reproduce the system logic in a completely different technology stack – languages, toolset and approaches – that makes models and the approaches that underpin them worth their weight in gold.

AI-enabled Modelling – Deliver Better Software, Faster

When we talk about data in fintech, we keep its sensitivity in mind. At the same time, having no access to system data for testing purposes is like devising a treatment plan with no accurate diagnostics, or participating in electronic trading with no access to market data. The ability to reconstruct and control dataflows via a model helps circumvent the access restrictions that a live environment would inevitably impose. Built properly, a ‘digital twin’ will be a tool for generating expected outputs for a practically unlimited number of permutations and controlled variations of parameters and conditions, some of which would have remained undiscovered by human-only testing activities. Enhanced with generative algorithms, the approach helps produce realistic, business-pertinent, feature-rich scenarios simulating the actions of authentic trading participants. It also enables the test framework to truly match the complexity of the infrastructure under test. AI-enabled software testing is an effective, industry-tested way to deliver better fintech software, faster.
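To make the idea of a model ‘generating expected outputs’ for many parameter variations more concrete, here is a toy sketch. It is a sketch under stated assumptions rather than a description of any real exchange or test tool: the price-time priority rule, the single-instrument order book, and the names `model_match`, `generate_scenario` and `find_mismatches` are all hypothetical and chosen only for illustration. The system under test is represented by any callable that takes the same order flow and returns its trades.

```python
import random
from typing import Callable, Iterable, List, Tuple

Order = Tuple[str, int, int]  # (side, limit price, quantity); side is "B" or "S"
Trade = Tuple[int, int]       # (execution price, quantity)


def model_match(orders: List[Order]) -> List[Trade]:
    """Toy reference model: price-time priority matching, one instrument."""
    bids: List[List[int]] = []  # resting buys as [price, qty], in arrival order
    asks: List[List[int]] = []  # resting sells as [price, qty], in arrival order
    trades: List[Trade] = []
    for side, price, qty in orders:
        own, opposite = (bids, asks) if side == "B" else (asks, bids)
        while qty > 0:
            # Resting orders whose price crosses the incoming limit price.
            if side == "B":
                crossing = [o for o in opposite if o[0] <= price]
            else:
                crossing = [o for o in opposite if o[0] >= price]
            if not crossing:
                break
            # Best price first; min/max return the earliest entry on ties,
            # which gives time priority within a price level.
            pick = min if side == "B" else max
            best = pick(crossing, key=lambda o: o[0])
            fill = min(qty, best[1])
            trades.append((best[0], fill))
            best[1] -= fill
            qty -= fill
            # Drop fully filled resting orders (in place, the list is aliased).
            opposite[:] = [o for o in opposite if o[1] > 0]
        if qty > 0:
            own.append([price, qty])  # the remainder rests in the book
    return trades


def generate_scenario(seed: int, n_orders: int = 20) -> List[Order]:
    """Synthetic, reproducible order flow; the ranges are arbitrary."""
    rng = random.Random(seed)
    return [(rng.choice("BS"), rng.randint(98, 102), rng.randint(1, 10))
            for _ in range(n_orders)]


def find_mismatches(system: Callable[[List[Order]], List[Trade]],
                    seeds: Iterable[int]) -> List[int]:
    """Return the seeds for which the system's trades differ from the model's."""
    return [s for s in seeds
            if system(generate_scenario(s)) != model_match(generate_scenario(s))]
```

Because every scenario is derived from a seed, any mismatch can be replayed exactly. In line with the ‘fraternal twin’ argument above, the value of such a check depends on the model being written independently of the system it is compared against.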

References:

[1] David Cotriss, How AI is Supercharging Digital Twins, Nasdaq, 31 October 2022.

[2] Bernard Marr, What Is Digital Twin Technology - And Why Is It So Important?, Forbes, 6 March 2017.

[3] Michael Grieves, Physical Twins, Digital Twins, and the Apollo Myth, 8 September 2022.

[4] Test Automation for CCPs and Exchanges – Operational Day Replay Limitations, Exactpro, July 2020.